10. Improvement

With our LLM prompt showing such strong results, you might be content to leave it as it is. But there are always ways to improve, and you might come across a circumstance where the model’s performance is less than ideal.

10.1. Learn from your model’s mistakes

One common tactic is to examine your model’s misclassifications and tweak your prompt to address any patterns they reveal.

One simple way to do this is to merge the LLM’s predictions with the human-labeled data and inspect the discrepancies yourself.

First, join the two tables on the payee name, adding suffixes so the two category columns stay distinct.

comparison_df = llm_df.merge(
    sample_df, on="payee", how="inner", suffixes=["_llm", "_human"]
)

Then filter to cases where the LLM and human labels don’t match.

mistakes_df = comparison_df[
    comparison_df.category_llm != comparison_df.category_human
]
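The merge and filter can be tried on a miniature pair of tables first. This is only a sketch: the three payees appear in this lesson, but the LLM labels for the two matching rows are assumed for illustration, and the validate argument is an optional extra that makes pandas raise an error if a payee appears more than once on either side.

```python
import pandas as pd

# Stand-in for the LLM output (labels for the last two rows are assumed)
llm_df = pd.DataFrame({
    "payee": ["THE OVAL ROOM", "THE IVY RESTAURANT", "BROWN PALACE HOTEL"],
    "category": ["Bar", "Restaurant", "Hotel"],
})

# Stand-in for the human-labeled sample
sample_df = pd.DataFrame({
    "payee": ["THE OVAL ROOM", "THE IVY RESTAURANT", "BROWN PALACE HOTEL"],
    "category": ["Restaurant", "Restaurant", "Hotel"],
})

# Both tables have a "category" column, so the suffixes tell them apart
comparison_df = llm_df.merge(
    sample_df,
    on="payee",
    how="inner",
    suffixes=["_llm", "_human"],
    validate="one_to_one",  # optional guard against duplicate payees
)

# Keep only the rows where the two labels disagree
mistakes_df = comparison_df[
    comparison_df.category_llm != comparison_df.category_human
]
print(mistakes_df)
```

If your real data contains repeated payee names, the validate guard will fail, which is a useful prompt to deduplicate before comparing.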

Looking at the misclassifications, you might notice that the LLM is struggling with a particular type of business name. You can then adjust your prompt to address that specific issue.

mistakes_df.head(10)

| | payee | category_llm | category_human |
|----:|:---------------------------------|:---------------|:-----------------|
| 16 | SOTTOVOCE MADERO | Restaurant | Other |
| 43 | SIBIA CAB | Bar | Other |
| 56 | THE OVAL ROOM | Bar | Restaurant |
| 85 | ELLA DINNING ROOM | Restaurant | Other |
| 87 | LAKELAND VILLAGE | Hotel | Other |
| 95 | THE PALMS | Bar | Restaurant |
| 104 | GRUBHUB, INC. | Other | Restaurant |
| 136 | NORTHERN CALIFORNIA WINE COUNTRY | Bar | Other |
| 144 | MAYAHUEL | Bar | Restaurant |
| 146 | TWENTY EIGHT | Bar | Other |

You could then output the mistakes to a spreadsheet and inspect them more closely.

mistakes_df.to_csv("mistakes.csv", index=False)
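Besides reading the rows one by one, it can help to count which pairs of labels get confused most often. The sketch below re-enters the ten rows shown above by hand so it runs on its own; with the real mistakes_df you would skip that step.

```python
import pandas as pd

# The ten misclassified rows from the table above
mistakes_df = pd.DataFrame(
    [
        ("SOTTOVOCE MADERO", "Restaurant", "Other"),
        ("SIBIA CAB", "Bar", "Other"),
        ("THE OVAL ROOM", "Bar", "Restaurant"),
        ("ELLA DINNING ROOM", "Restaurant", "Other"),
        ("LAKELAND VILLAGE", "Hotel", "Other"),
        ("THE PALMS", "Bar", "Restaurant"),
        ("GRUBHUB, INC.", "Other", "Restaurant"),
        ("NORTHERN CALIFORNIA WINE COUNTRY", "Bar", "Other"),
        ("MAYAHUEL", "Bar", "Restaurant"),
        ("TWENTY EIGHT", "Bar", "Other"),
    ],
    columns=["payee", "category_llm", "category_human"],
)

# Count how often each (human label, LLM label) pair occurs
confusion_pairs = (
    mistakes_df.groupby(["category_human", "category_llm"])
    .size()
    .sort_values(ascending=False)
)
print(confusion_pairs)
```

In this small slice, most of the disagreements involve the LLM answering Bar where the human chose something else, which hints at where the prompt may need more guidance.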

As I scanned the full list, I observed that the LLM was struggling with businesses that had both the word “bar” and the word “restaurant” in their name. A simple fix would be to add a new line to your prompt that tells the LLM what to do in that case.

If a business name contains both the word "Restaurant" and
the word "Bar", you should classify it as a Restaurant.
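Before committing to a rule like this, you may want to check how many names it would actually affect. Here is a minimal sketch that flags names containing both words. The payee names are invented for illustration and do not come from the dataset.

```python
import pandas as pd

# Hypothetical payee names, made up for this example only
payees = pd.Series([
    "EXAMPLE BAR AND RESTAURANT",
    "SAMPLE RESTAURANT & BAR",
    "BARBARA'S BAKERY",
    "THE CORNER BAR",
])

# \b marks a word boundary, so "BARBARA" does not count as "BAR"
has_bar = payees.str.contains(r"\bBAR\b", case=False, regex=True)
has_restaurant = payees.str.contains(r"\bRESTAURANT\b", case=False, regex=True)

# Names that contain both words
both = payees[has_bar & has_restaurant]
print(both.tolist())
```

Running the same filter against the payee column of your mistakes table would show whether the pattern is common enough to deserve its own line in the prompt.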

10.2. Be humble, human

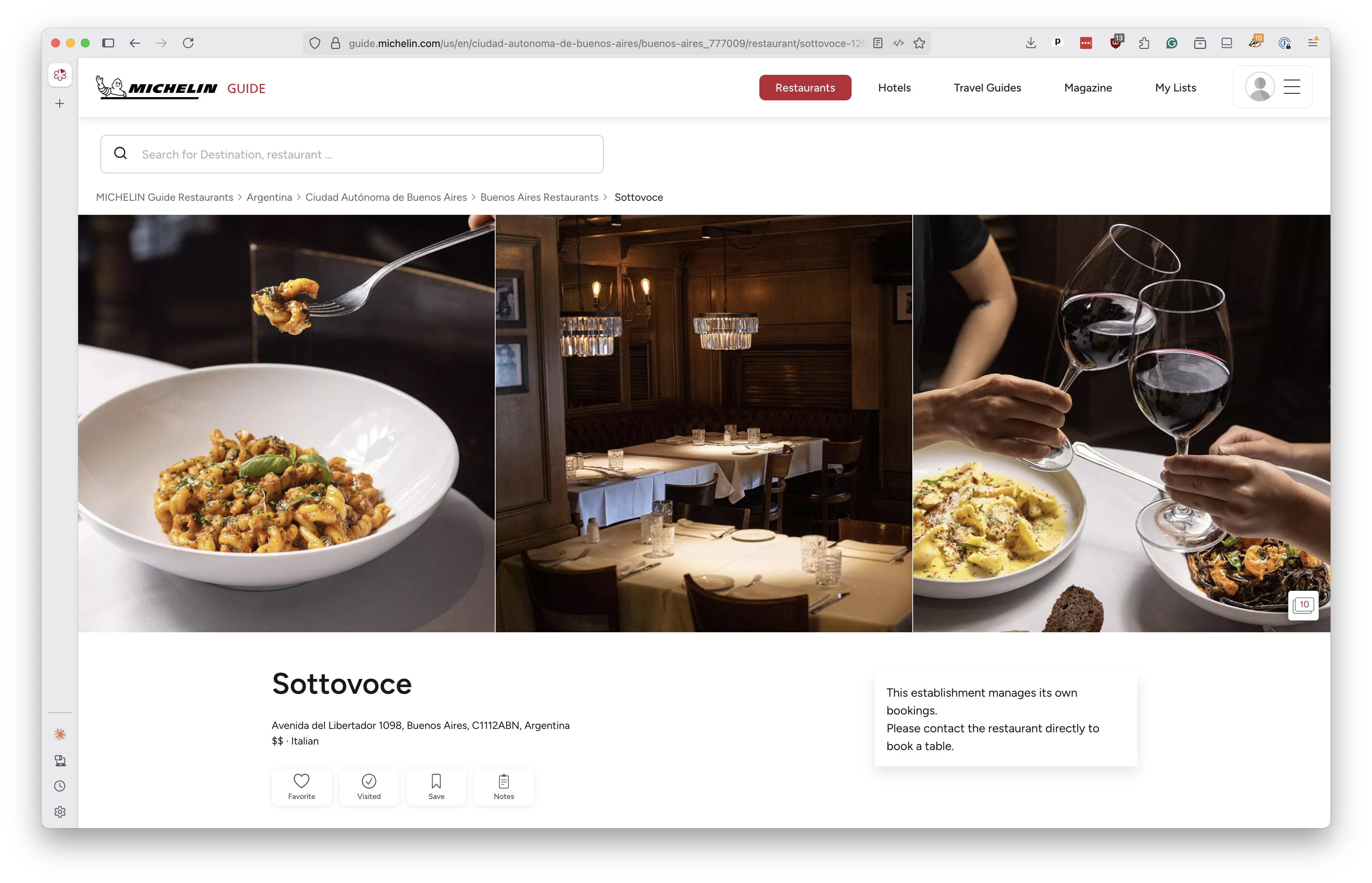

Look closely at the misclassifications above and you’ll see another interesting example. The first entry, “SOTTOVOCE MADERO,” was classified as a restaurant by the LLM but labeled as “Other” by the human. According to our evaluation routine, the LLM got it wrong.

But a quick Google search will reveal that Sottovoce is indeed a restaurant, found in the Puerto Madero neighborhood of Buenos Aires.

So, in this case, the LLM was actually correct and the human label was wrong, a truly humbling moment for the creators of this class. It is also a reminder of how powerful LLMs can be at understanding and classifying data, even when the information is incomplete or ambiguous.
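When a review confirms that the human label was the wrong one, you can correct the labeled sample before evaluating again. The sketch below is one possible approach, not part of the original lesson. It assumes the labeled table has a column named category, and it uses a two-row stand-in for the real sample.

```python
import pandas as pd

# A tiny stand-in for the human-labeled sample
sample_df = pd.DataFrame({
    "payee": ["SOTTOVOCE MADERO", "THE IVY RESTAURANT"],
    "category": ["Other", "Restaurant"],
})

# Corrections confirmed by hand, keyed by payee name
corrections = {"SOTTOVOCE MADERO": "Restaurant"}

# Payees without a correction map to NaN and keep their existing label
corrected = sample_df.payee.map(corrections)
sample_df["category"] = corrected.fillna(sample_df.category)
print(sample_df)
```

Keeping the corrections in a dictionary leaves a record of every label you changed and why you might want to revisit it.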

10.3. Use training data as few-shot prompts

Earlier in the lesson, we showed how you can feed the LLM examples of inputs and outputs ahead of your request as part of a “few-shot” prompt. An added benefit of coding a supervised sample for testing is that you can use the training slice of the set to prime the LLM with this technique. If you’ve already done the work of labeling your data, you might as well use it to improve your model too.

Converting the training set you set aside into a few-shot prompt is a simple matter of formatting it to fit your LLM’s expected input. Here’s how you might do it in our case.

def get_fewshots(training_input, training_output, batch_size=10):
    """Convert the training data into a few-shot prompt"""
    # Create a list to hold the formatted few-shot examples
    fewshot_list = []

    # Loop through the batches of input and output
    for input_list, output_list in zip(
        batched(training_input.payee, batch_size),
        batched(training_output, batch_size),
    ):
        # Add a "user" message and the expected "assistant" response
        fewshot_list.extend([
            {"role": "user", "content": "\n".join(input_list)},
            {
                "role": "assistant",
                "content": PayeeList(answers=list(output_list)).model_dump_json(),
            },
        ])

    # Return the list of few-shot examples, one for each batch
    return fewshot_list

Pass in your training data.

fewshot_list = get_fewshots(training_input, training_output)

Take a peek at the first pair to see if it’s what we expect.

fewshot_list[:2]

[
    {
        "role": "user",
        "content": "UFW OF AMERICA - AFL-CIO\nRE-ELECT FIONA MA\nELLA DINNING ROOM\nMICHAEL EMERY PHOTOGRAPHY\nLAKELAND VILLAGE\nTHE IVY RESTAURANT\nMOORLACH FOR SENATE 2016\nBROWN PALACE HOTEL\nAPPLE STORE FARMERS MARKET\nCABLETIME TV",
    },
    {
        "role": "assistant",
        "content": '{"answers": ["Other", "Other", "Other", "Other", "Other", "Restaurant", "Other", "Hotel", "Other", "Other"]}',
    },
]
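To see the batching logic in isolation, here is a dependency-free sketch of the same idea. It is an approximation rather than the lesson's function: json.dumps stands in for the PayeeList model, a small helper stands in for batched, and the name get_fewshots_plain is hypothetical.

```python
import json
from itertools import islice


def batched(iterable, n):
    """Yield tuples of up to n items (stand-in for itertools.batched in Python 3.12+)."""
    it = iter(iterable)
    while chunk := tuple(islice(it, n)):
        yield chunk


def get_fewshots_plain(payees, labels, batch_size=10):
    """Pair each batch of payees with its batch of labels as chat messages."""
    messages = []
    for input_batch, output_batch in zip(
        batched(payees, batch_size), batched(labels, batch_size)
    ):
        # One "user" message holding the names, one per line
        messages.append({"role": "user", "content": "\n".join(input_batch)})
        # One "assistant" message holding the matching answers as JSON
        messages.append(
            {"role": "assistant", "content": json.dumps({"answers": list(output_batch)})}
        )
    return messages


# Three payees and labels taken from the example output above
payees = ["THE IVY RESTAURANT", "BROWN PALACE HOTEL", "CABLETIME TV"]
labels = ["Restaurant", "Hotel", "Other"]
messages = get_fewshots_plain(payees, labels, batch_size=2)
print(messages)
```

With a batch size of two, the three examples become two user and assistant pairs, the second holding the single leftover name.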

Return to classify_payees and replace the previous hardcoded few-shot examples with the generated list. The system prompt stays as it is.

response = client.chat.completions.create(
    messages=[
        {
            "role": "system",
            "content": prompt,
        },
        *get_fewshots(training_input, training_output),
        {
            "role": "user",
            "content": "\n".join(name_list),
        },
    ],
    model=model,
    response_format={
        "type": "json_schema",
        "json_schema": {
            "name": "PayeeList",
            "schema": PayeeList.model_json_schema()
        }
    },
    temperature=0,
)
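Calling get_fewshots inside the request rebuilds the same list for every batch. One option, sketched here under assumptions, is to build the list once and pass it to a small helper that assembles the messages. The helper name build_messages and the placeholder values are hypothetical and not part of the lesson's code.

```python
def build_messages(system_prompt, fewshot_list, name_list):
    """Assemble the chat messages: system prompt, few-shot pairs, then the new request."""
    return [
        {"role": "system", "content": system_prompt},
        *fewshot_list,
        {"role": "user", "content": "\n".join(name_list)},
    ]


# Placeholder few-shot pair, for illustration only
fewshot_list = [
    {"role": "user", "content": "THE IVY RESTAURANT\nBROWN PALACE HOTEL"},
    {"role": "assistant", "content": '{"answers": ["Restaurant", "Hotel"]}'},
]

messages = build_messages(
    "Placeholder system prompt",
    fewshot_list,
    ["THE OVAL ROOM", "THE PALMS"],
)

# The order matters: instructions, then examples, then the names to classify
roles = [message["role"] for message in messages]
print(roles)
```

The resulting list could then be passed as the messages argument of the request shown above.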

And all you need to do is run it again.

llm_df = classify_batches_parallel(
    test_input.payee,
    model="meta-llama/Llama-4-Maverick-17B-128E-Instruct-FP8",
)

How can you tell if your results have improved? You run that same classification report and see if the numbers have gone up.

print(classification_report(test_output, llm_df.category))
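To compare runs without eyeballing two printed reports, you can ask scikit-learn for the numbers directly. The labels below are toy values invented for this sketch, not results from the lesson. The variable names before and after are likewise hypothetical.

```python
from sklearn.metrics import accuracy_score, classification_report

# Toy labels, invented for illustration only
y_true = ["Restaurant", "Bar", "Other", "Hotel", "Other", "Restaurant"]
before = ["Bar", "Bar", "Other", "Hotel", "Restaurant", "Restaurant"]
after = ["Restaurant", "Bar", "Other", "Hotel", "Restaurant", "Restaurant"]

# Overall share of correct predictions for each run
accuracy_before = accuracy_score(y_true, before)
accuracy_after = accuracy_score(y_true, after)

# output_dict=True returns the report as a nested dictionary instead of text
report_before = classification_report(y_true, before, output_dict=True, zero_division=0)
report_after = classification_report(y_true, after, output_dict=True, zero_division=0)

# Macro F1 averages the per-category F1 scores with equal weight
f1_before = report_before["macro avg"]["f1-score"]
f1_after = report_after["macro avg"]["f1-score"]

print(f"accuracy: {accuracy_before:.3f} -> {accuracy_after:.3f}")
print(f"macro F1: {f1_before:.3f} -> {f1_after:.3f}")
```

Saving these numbers after each prompt change gives you a running log of whether a tweak helped or hurt.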

Repeating this cycle of prompt refinement, testing and review can gradually improve your prompt and return even better results.